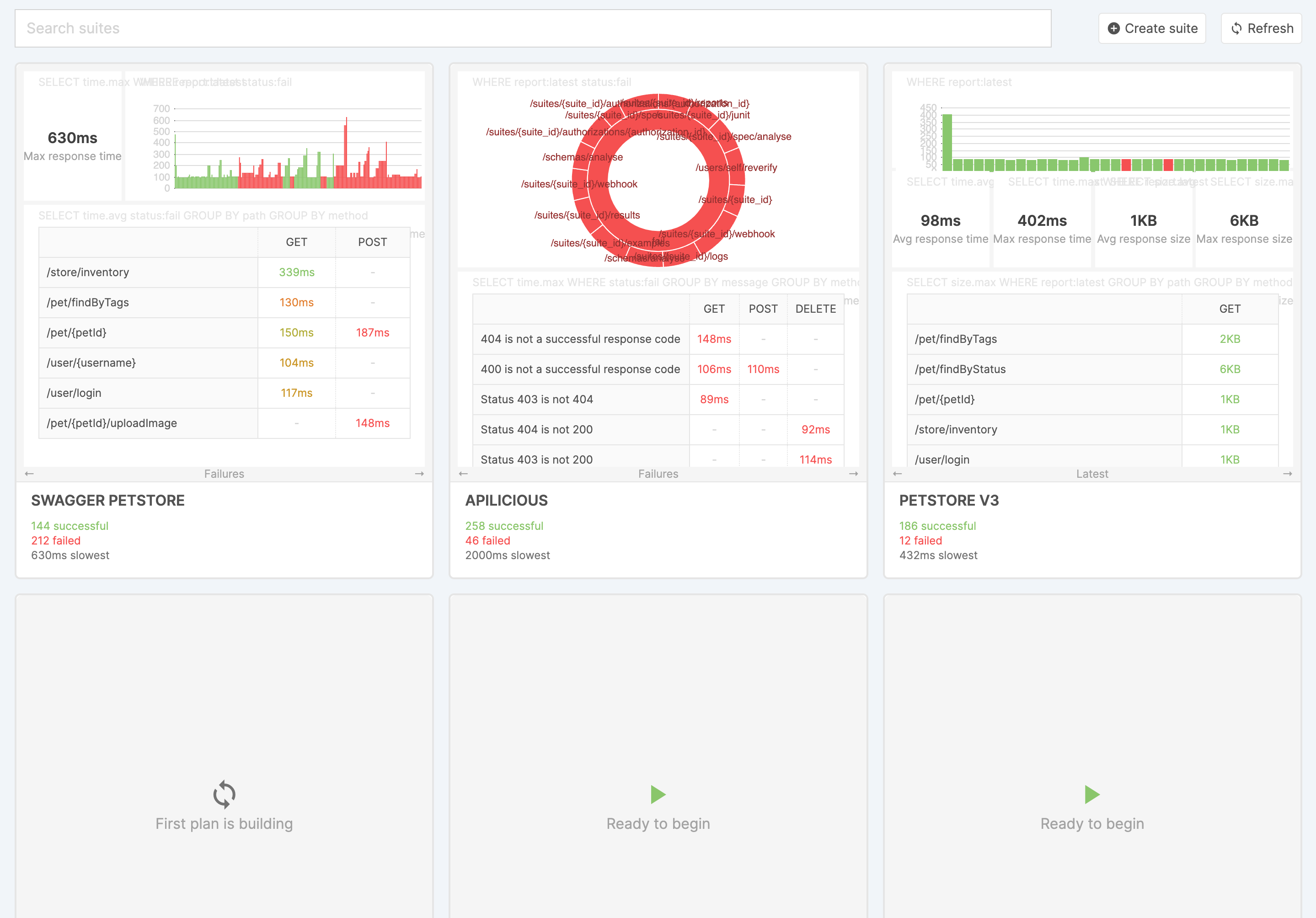

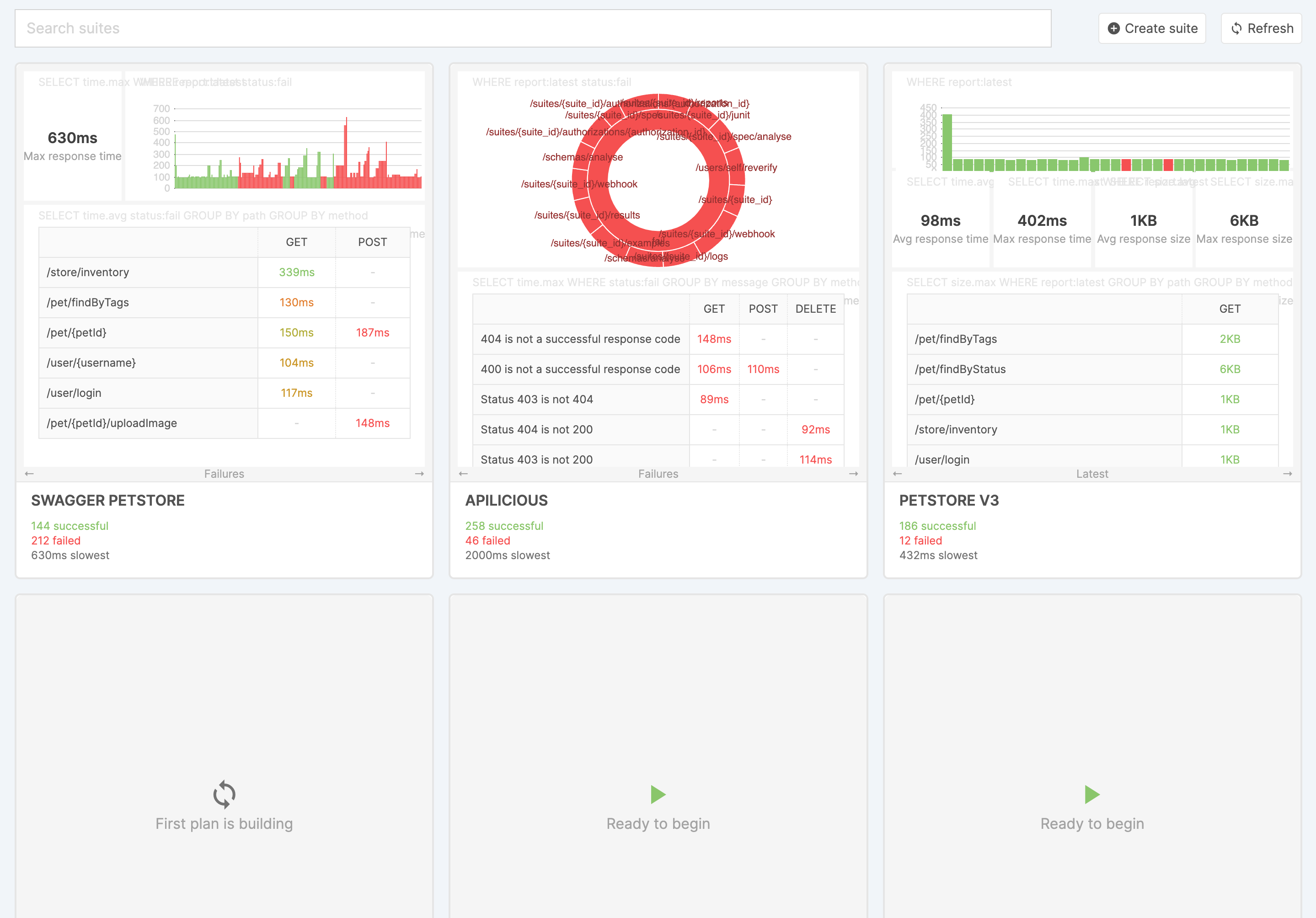

Suites

Clicking "Home" at the top of the page always takes you back to the suite list.

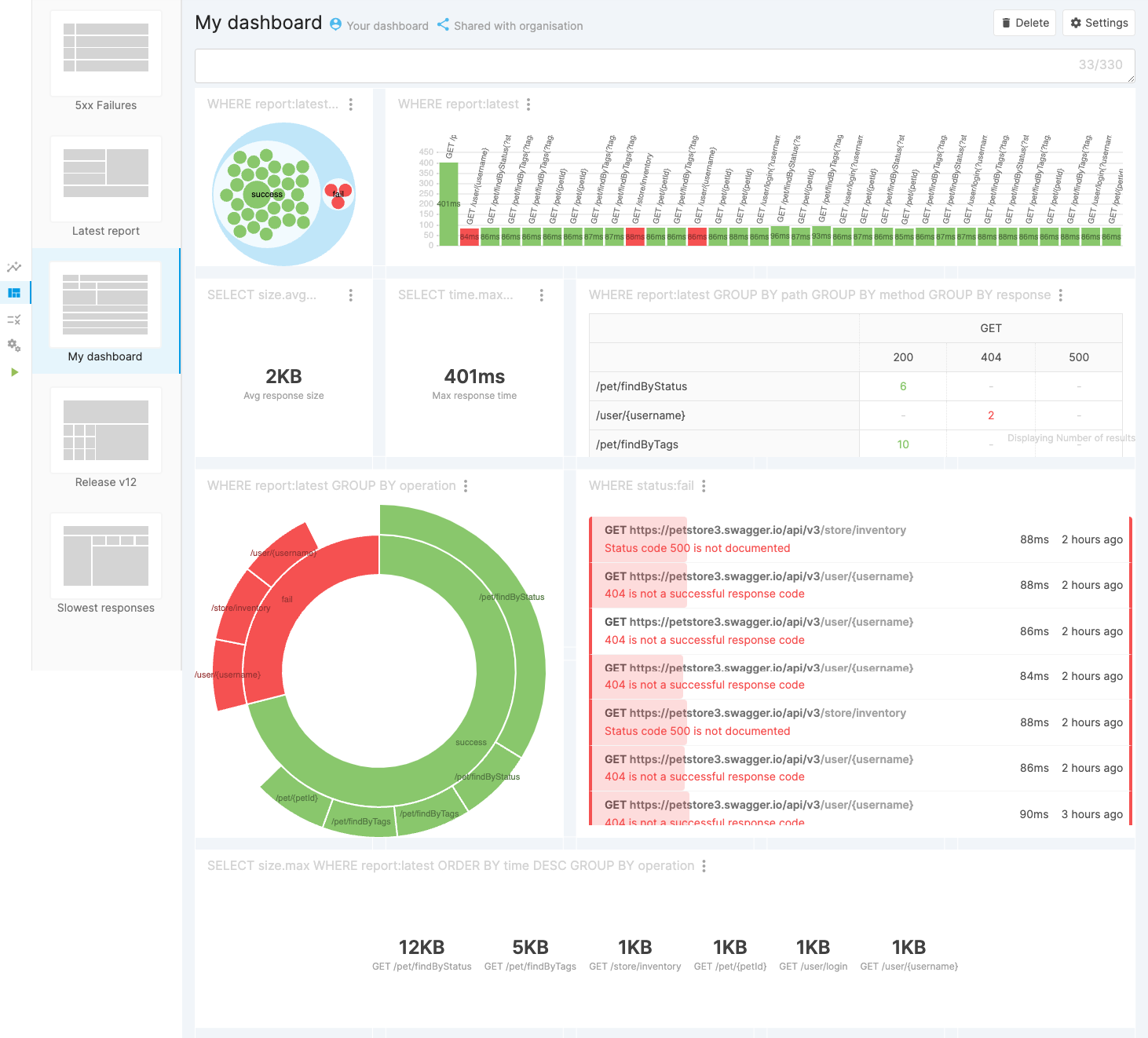

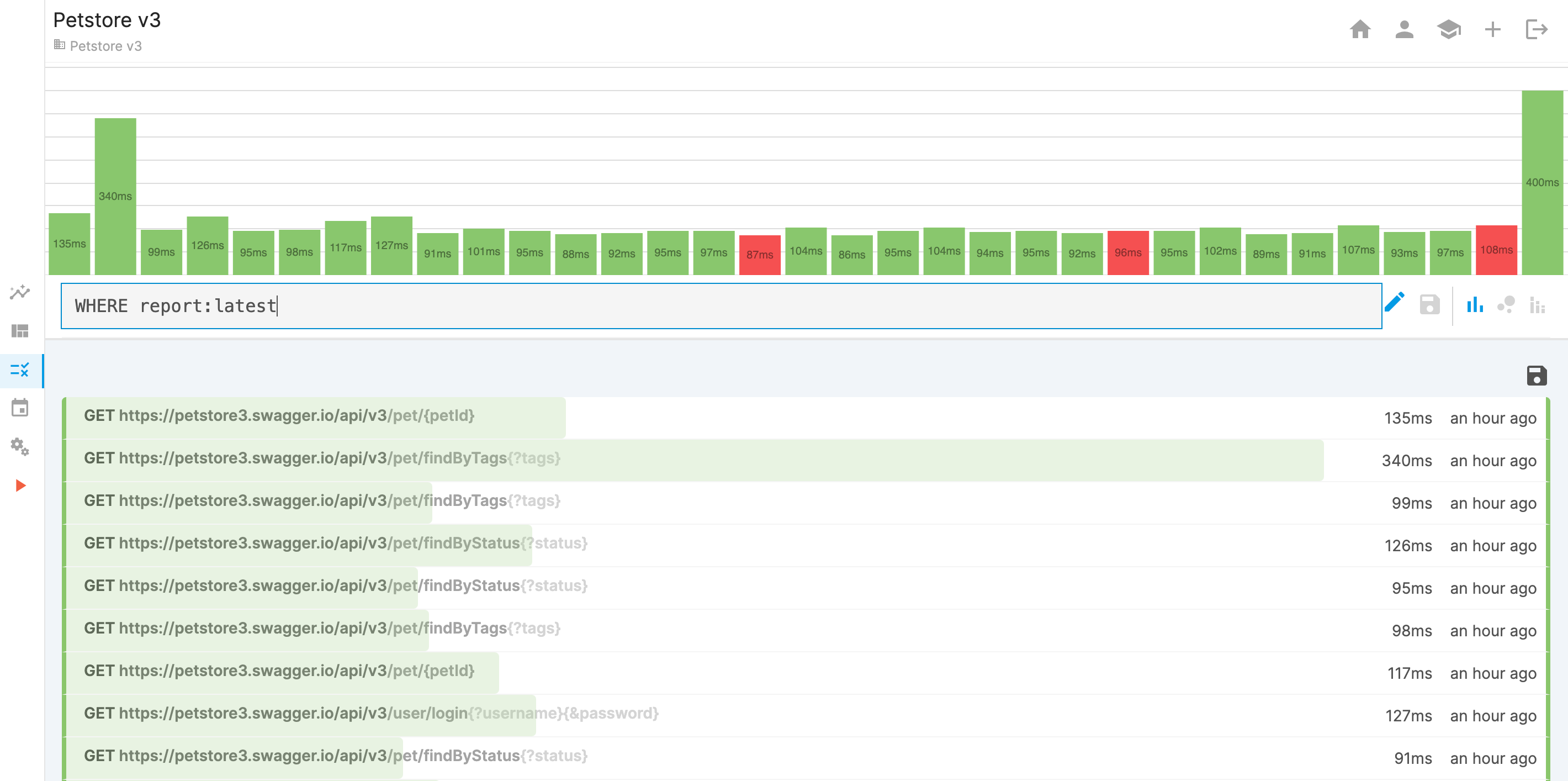

Suite: Latest

This view summarises the latest known information about the suite and lists the most recent test reports.

Maintaining examples

If your specification changes, you may need to update your examples. Using dynamic examples reduces how much of this maintenance you need to do.

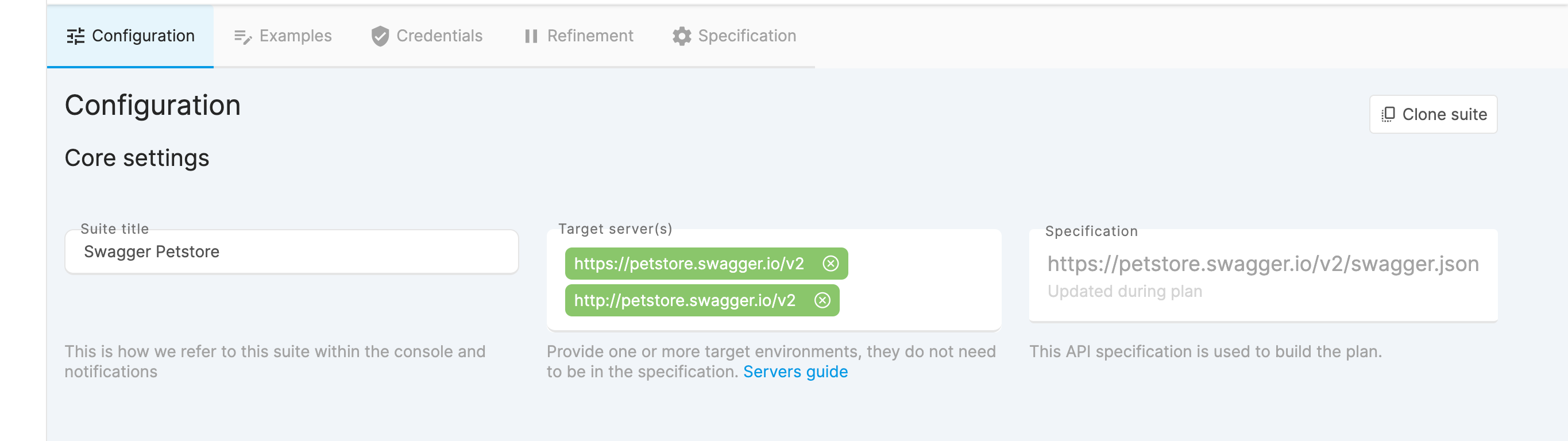

Automatic updates

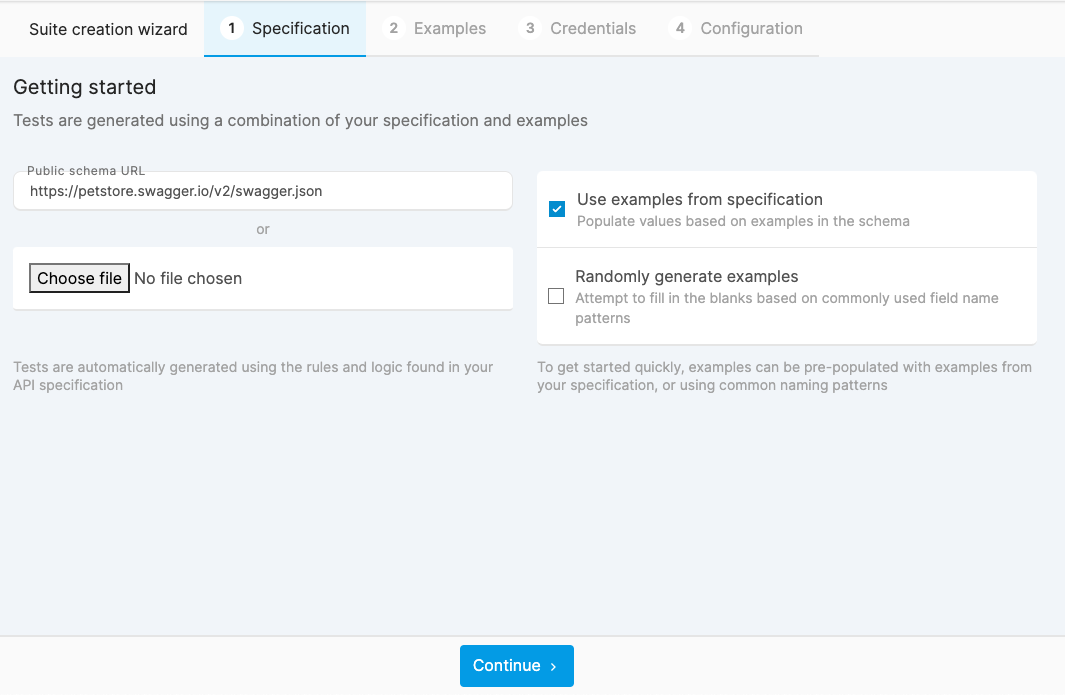

If you provide the specification as a public URL, it is updated every time the plan is rebuilt. If your API frequently renames properties, your examples must be updated to match.

Updating a suite

A test plan is sometimes regenerated. Any changes take effect from the next report onwards.

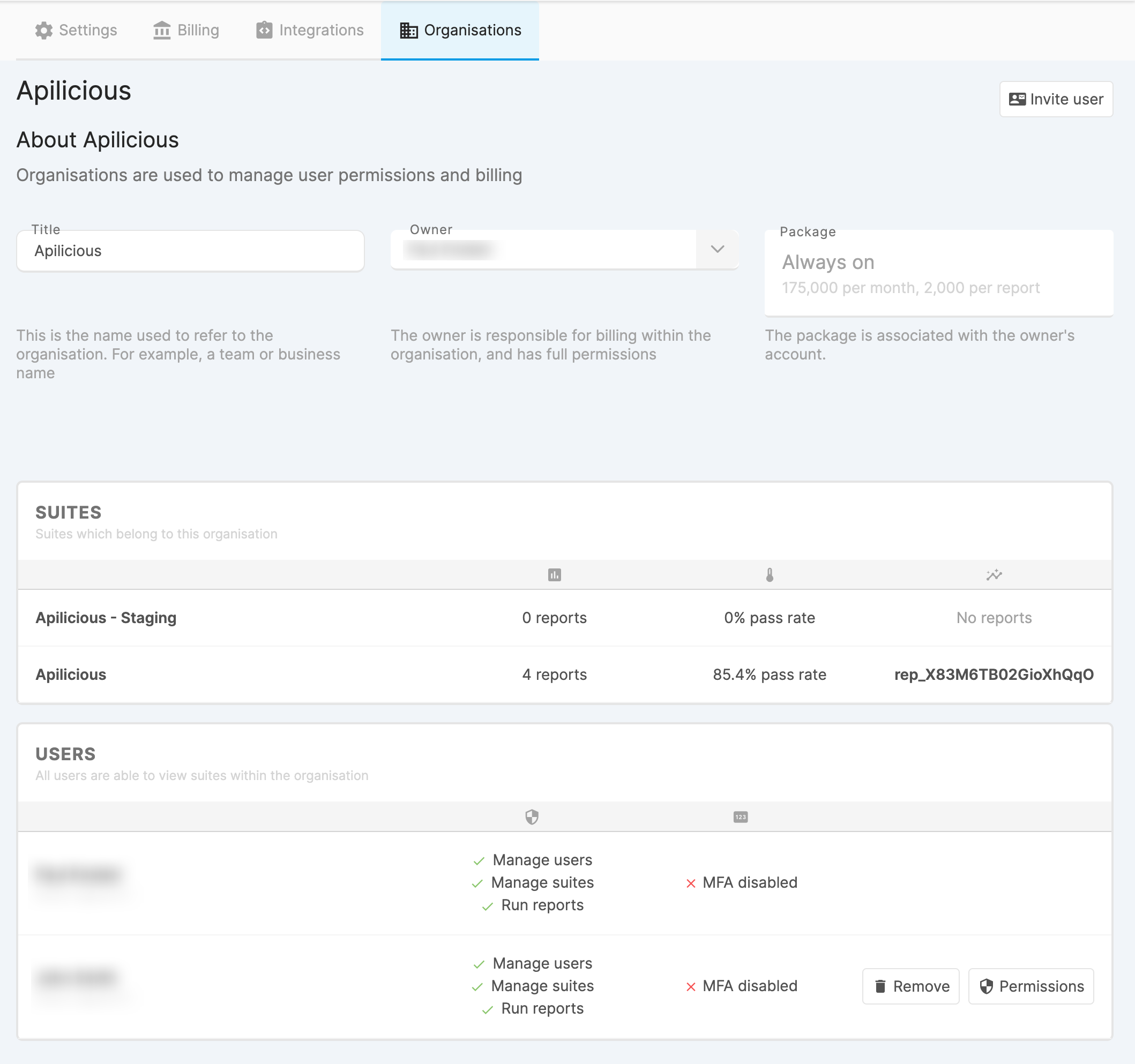

Authorized users only

Only users with the "Manage reports" permission can update the configuration. Some fields can only be updated by the owner.

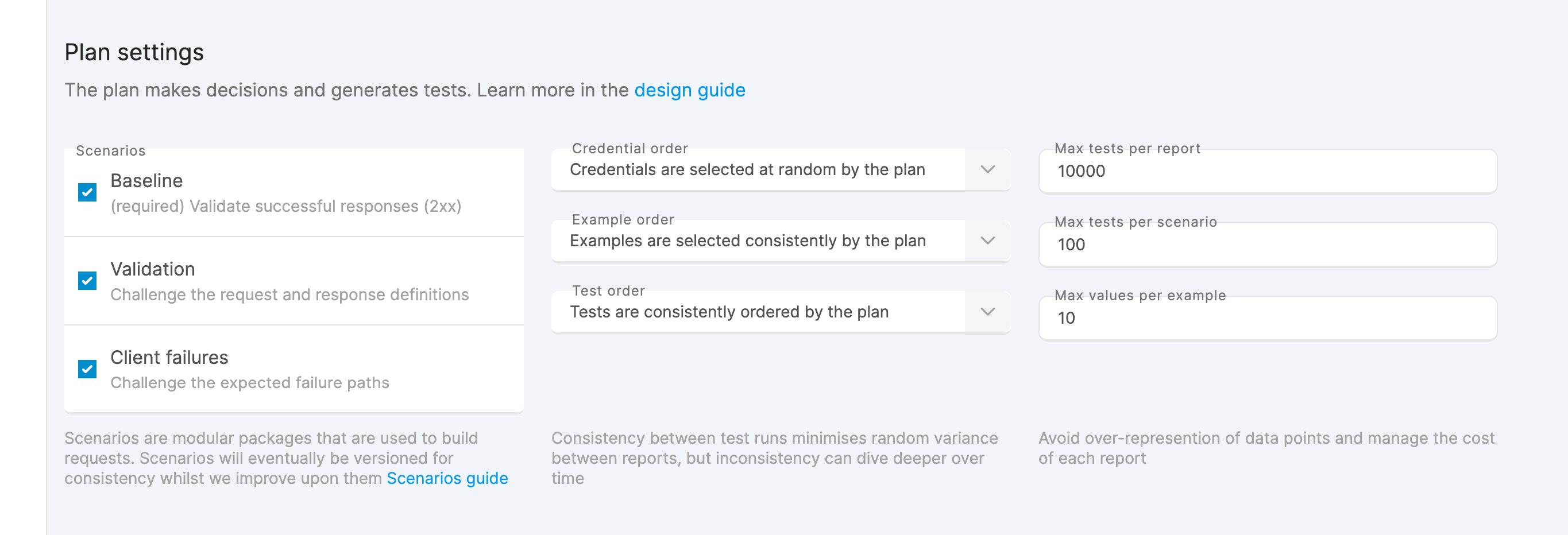

Consistent vs Random

Consistent tests make it easier to compare reports side by side. Randomisation increases the chance of discovering issues.

Consistent with low limits

Combining low limits with consistent tests reduces the likelihood of finding a failing test over time, because every run repeats the same small selection of tests.
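The following Python sketch illustrates this trade-off. It is only a rough model: it assumes that a run picks up to `limit` tests from the plan and that "consistent" means reusing a fixed random seed. `select_tests` is a hypothetical helper and does not describe the test runner's actual selection logic.

```python
import random


def select_tests(all_tests, limit, seed=None):
    """Hypothetical helper: pick up to `limit` tests from the plan.

    A fixed seed returns the same selection on every run; seed=None lets
    Python seed the generator from OS entropy, so each run differs.
    """
    rng = random.Random(seed)
    return rng.sample(all_tests, min(limit, len(all_tests)))


tests = [f"test-{i}" for i in range(100)]

# Consistent: same seed every run, so reports line up side by side.
consistent_runs = [select_tests(tests, limit=5, seed=42) for _ in range(10)]

# Random: a fresh selection every run, so more of the plan is explored.
random_runs = [select_tests(tests, limit=5) for _ in range(10)]

covered_consistent = set().union(*consistent_runs)
covered_random = set().union(*random_runs)
print(len(covered_consistent))  # always 5: the same tests every time
print(len(covered_random))      # usually far more than 5 after ten runs
```

With a low limit and a fixed seed, ten runs still cover only five distinct tests. Random selection gradually covers more of the plan.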

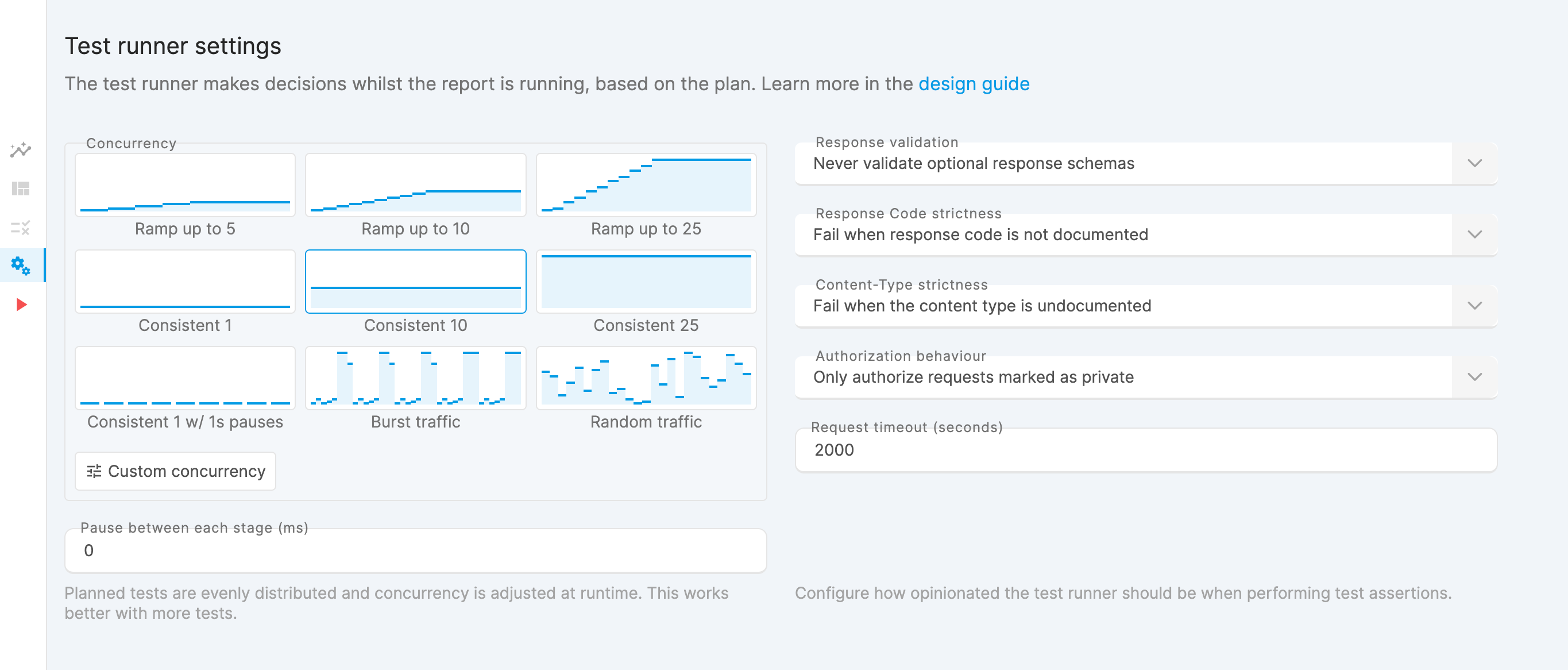

Request concurrency

Concurrency steps are evenly distributed across available tests. Smaller plans may not reach the maximum concurrency.
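The following Python sketch shows why a small plan can fall short of the maximum. It rests on stated assumptions, not on the test runner's real scheduler. In this hypothetical model, concurrency ramps up in `num_steps` equal steps, the tests are split evenly across those steps, and a step cannot send more requests at once than it has tests.

```python
import math


def effective_concurrency(num_tests, max_concurrency, num_steps):
    """Hypothetical model: return the concurrency actually reached at each step."""
    per_step, extra = divmod(num_tests, num_steps)
    levels = []
    for step in range(num_steps):
        # The target concurrency rises evenly towards the maximum.
        target = math.ceil(max_concurrency * (step + 1) / num_steps)
        # Tests are shared out evenly; earlier steps take any remainder.
        tests_in_step = per_step + (1 if step < extra else 0)
        # A step can never run more requests at once than it has tests.
        levels.append(min(target, tests_in_step))
    return levels


print(effective_concurrency(num_tests=1000, max_concurrency=20, num_steps=4))
# [5, 10, 15, 20]
print(effective_concurrency(num_tests=40, max_concurrency=20, num_steps=4))
# [5, 10, 10, 10]
```

In this model, the 40-test plan peaks at 10 concurrent requests because each step only has 10 tests to run.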

Concurrency limitations

We plan to increase the maximum concurrency in future. If you would like higher concurrency now, please get in touch and we will be able to help.

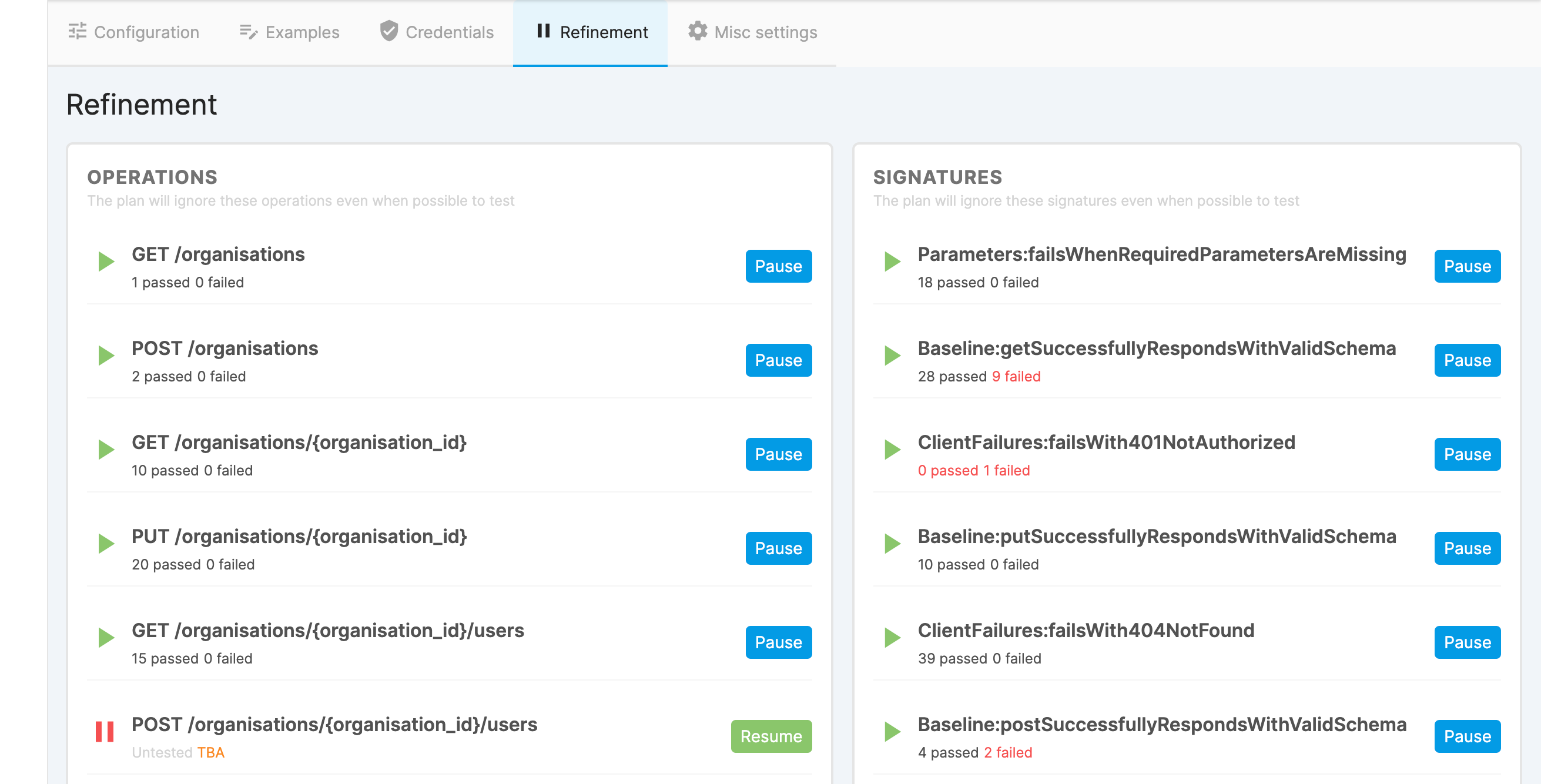

Refining failure sensitivity

If tests are still "incorrectly failing", you can use skips to refine your baseline. If you feel this is an issue with the test runner, please get in touch.

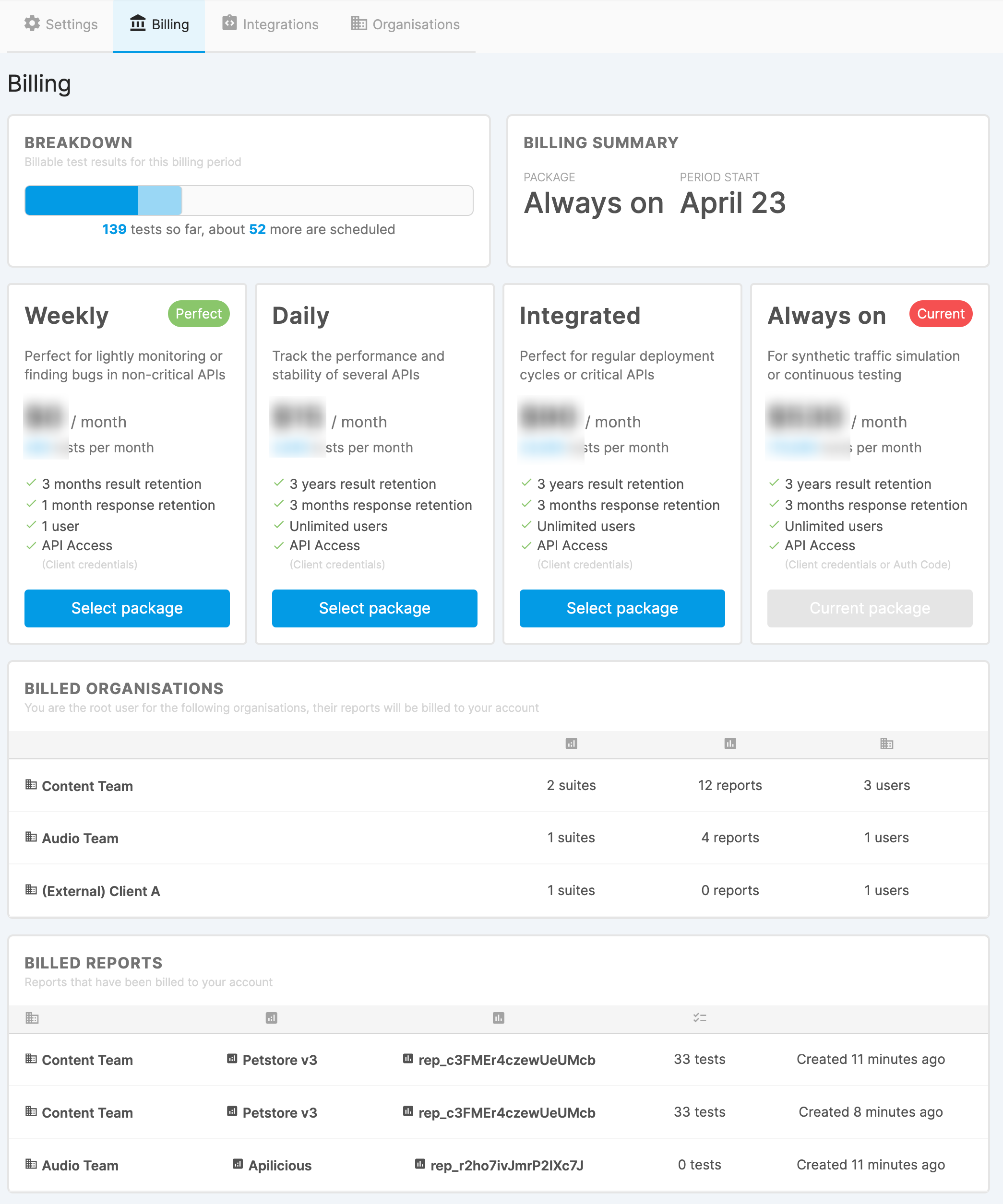

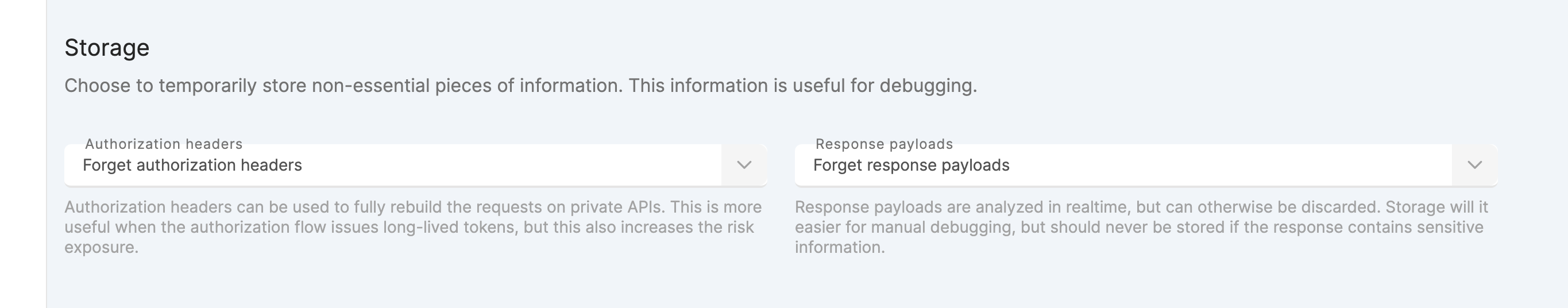

Result retention

Your account type determines how long test results are retained. Raw responses are kept for up to three months, while the result itself is kept for up to three years.
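The following Python sketch gives a rough way to work out what is still available for a past run. It only applies the maximum windows stated above, because your account type may shorten both periods. It also assumes that the windows are counted in calendar months from the run date and that the last day is inclusive. `retained` and `add_months` are hypothetical helpers.

```python
import calendar
from datetime import date


def add_months(d, months):
    """Same day `months` later, clamped to the last day of shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def retained(run_date, today):
    """Return (raw_responses_available, result_available) using upper bounds only."""
    raw_available = today <= add_months(run_date, 3)      # up to three months
    result_available = today <= add_months(run_date, 36)  # up to three years
    return raw_available, result_available


print(retained(date(2024, 1, 15), date(2024, 3, 1)))  # (True, True)
print(retained(date(2024, 1, 15), date(2024, 6, 1)))  # (False, True)
print(retained(date(2024, 1, 15), date(2027, 2, 1)))  # (False, False)
```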

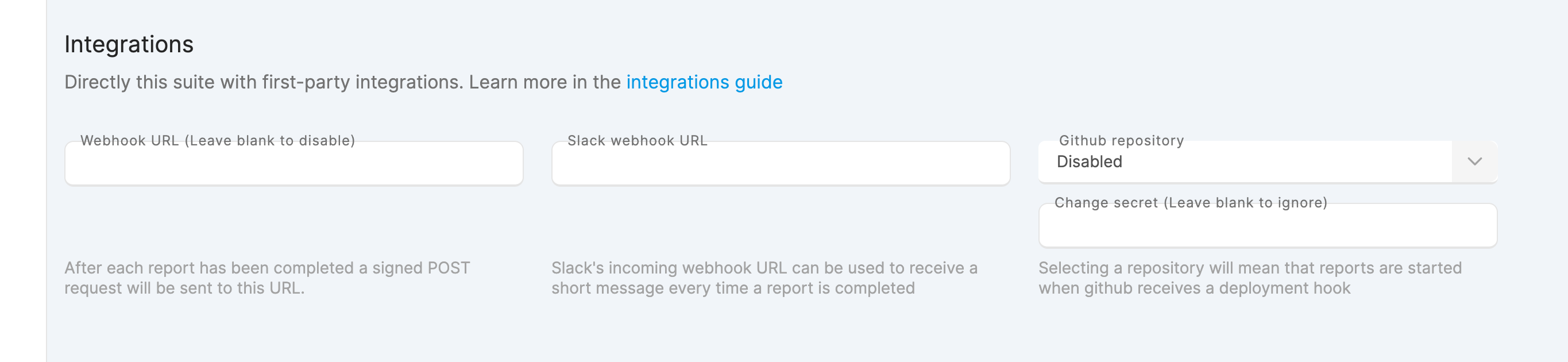

Outbound webhooks

Setting up automation

More information on setting up integrations can be found in the automation guide.

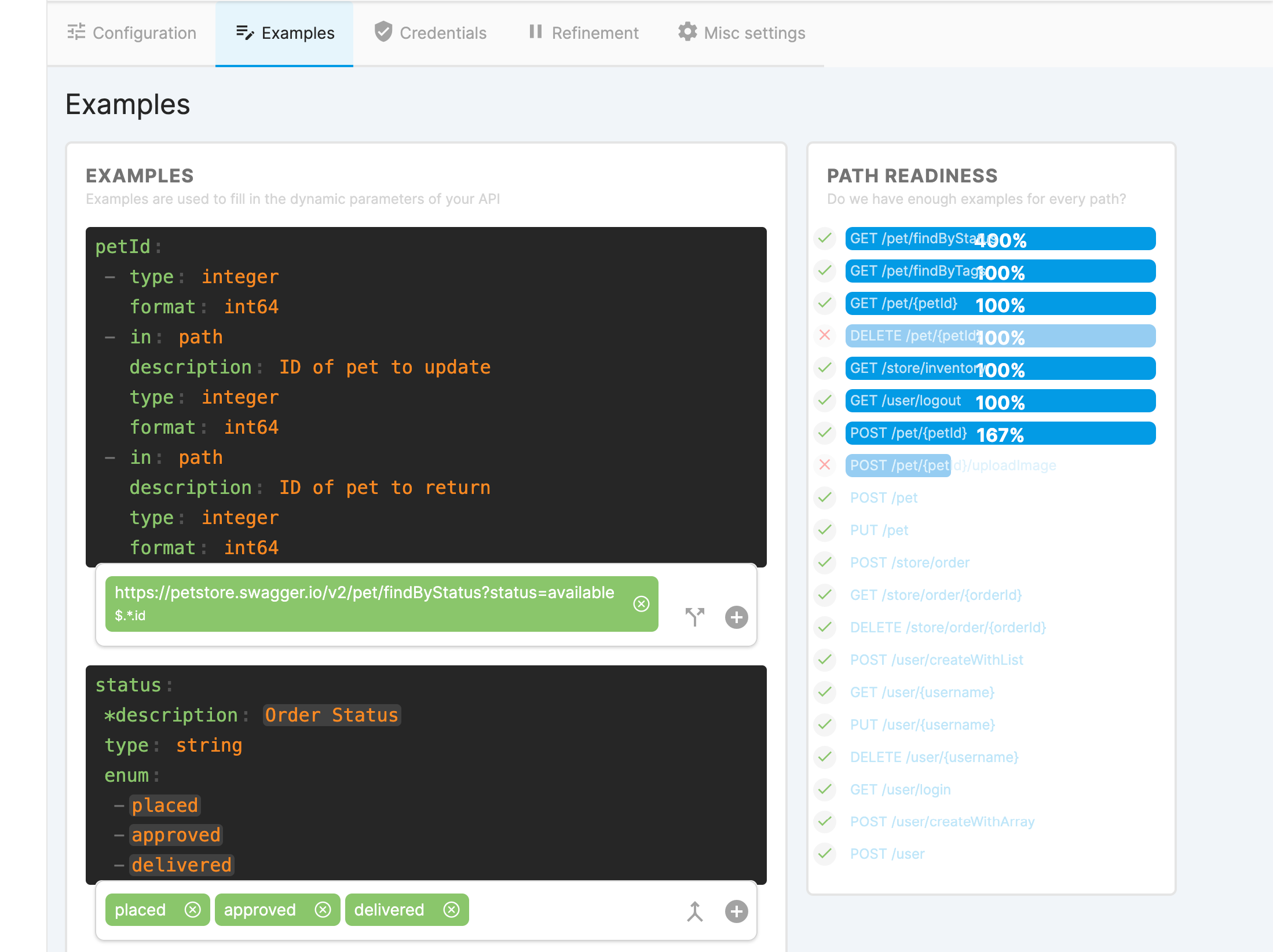

Updating Examples

Choosing examples

To avoid having to skip tests, use examples that your credentials are authorized to access.

Everything will be tested

The plan and test runner consider every possible combination of requests. Use the path readiness section to skip any risky operations.
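The following Python sketch shows how quickly the number of combinations grows and what a skip removes. The operations, example values and the `is_skipped` rule are all hypothetical. The real plan derives its combinations from your specification and examples, and you configure skips in the path readiness section, not in code.

```python
from itertools import product

# Hypothetical operations and example values, standing in for what the
# plan would take from your specification and examples.
operations = [
    ("GET", "/users/{id}"),
    ("PATCH", "/users/{id}"),
    ("DELETE", "/users/{id}"),
]
user_ids = ["42", "43"]
formats = ["json", "xml"]


def is_skipped(method, path):
    """Hypothetical skip rule: treat every DELETE as a risky operation."""
    return method == "DELETE"


# Every combination of operation, example id and format, minus the skips.
requests = [
    (method, path.format(id=user_id), fmt)
    for (method, path), user_id, fmt in product(operations, user_ids, formats)
    if not is_skipped(method, path)
]

print(len(requests))  # 8: 2 remaining operations x 2 ids x 2 formats
```

Without the skip rule, the same inputs would produce 12 requests, including 4 DELETE calls against real user ids.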

It is what you make of it

The examples are built from your API specification. The more information you add to your specification, the easier the examples are to write.

Advanced usage

Read the examples guide to learn how to create dynamic examples or build a custom datasource.

User isolation

The test runner tests every possible operation, so we recommend creating a dedicated user or client that cannot cause any problems when this happens.

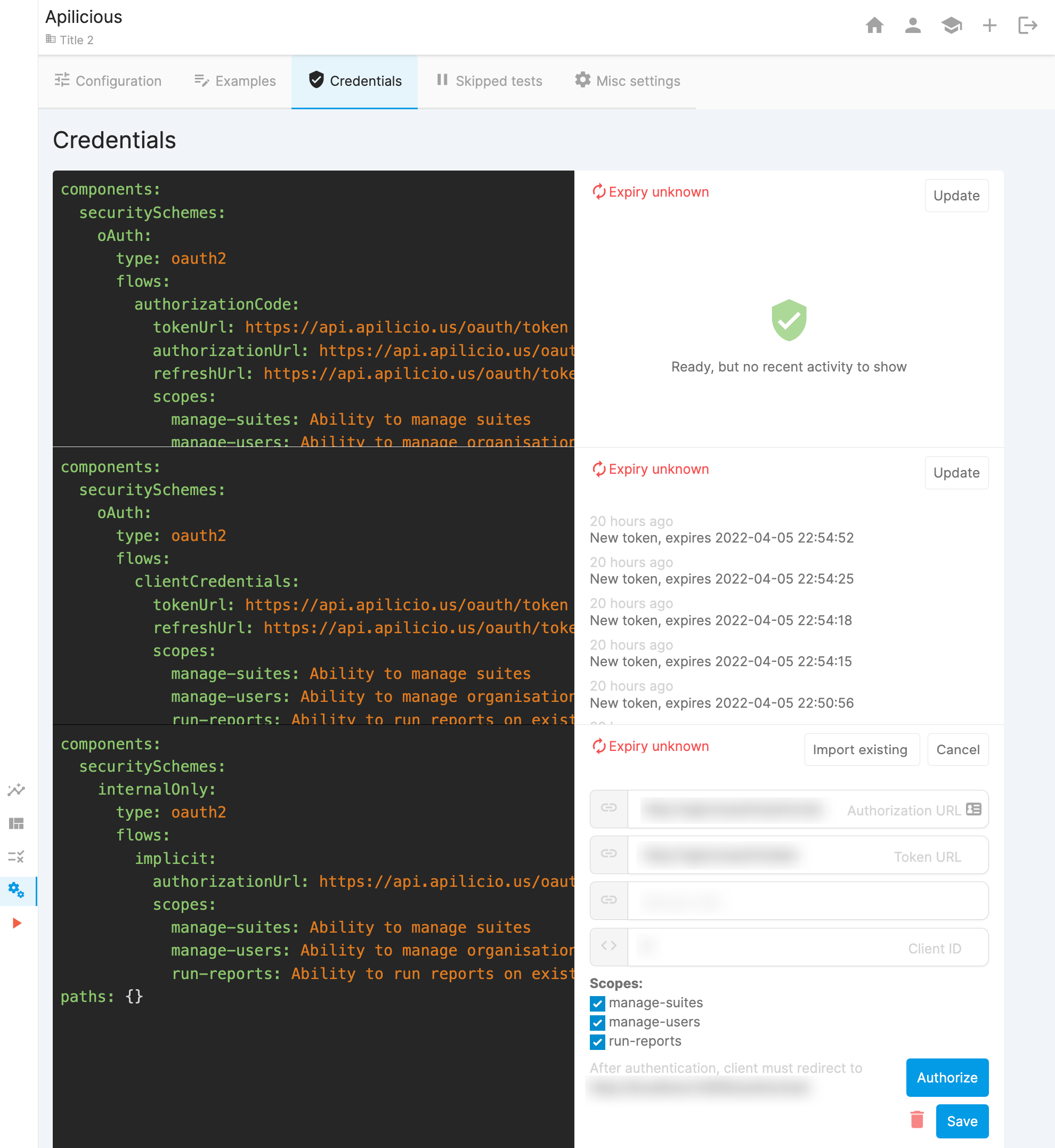

Keeping it simple

Unless you are specifically testing authentication flows, we recommend using a single (refreshable) auth flow or a long-lived API key or bearer token.
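The following Python sketch illustrates what "a single refreshable flow" means. One token is obtained, reused for every request, and only replaced shortly before it expires. It is a generic client-side illustration, not how the test runner manages credentials internally. `fetch_token` is a hypothetical callable that returns `(token, lifetime_seconds)`, and the 30-second margin is an arbitrary choice.

```python
import time


class RefreshingToken:
    """Cache one access token and refresh it shortly before it expires."""

    def __init__(self, fetch_token, margin_seconds=30, clock=time.monotonic):
        self._fetch = fetch_token  # hypothetical: returns (token, lifetime_seconds)
        self._margin = margin_seconds
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        # Fetch a new token only if there is none or it is about to expire.
        if self._token is None or self._clock() >= self._expires_at - self._margin:
            token, lifetime = self._fetch()
            self._token = token
            self._expires_at = self._clock() + lifetime
        return self._token


# Usage with a fake token endpoint and a controllable clock.
calls = []


def fake_fetch():
    calls.append(1)
    return f"token-{len(calls)}", 300  # each token lives for 300 seconds


now = [0.0]
auth = RefreshingToken(fake_fetch, clock=lambda: now[0])
first = auth.get()   # "token-1"
now[0] = 100.0
second = auth.get()  # still "token-1": plenty of lifetime left
now[0] = 280.0
third = auth.get()   # "token-2": within the 30 s margin before expiry at 300
```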

Credentials should be valid

Credentials are assumed to have full access to the provided examples and enabled operations. If that is not possible, skip the affected tests.

Managing environments

The authorization server is configurable for each credential, which gives you flexibility when testing against different environments.

Refining scope

You can also use skips to refine the scope of a test suite. For example, you may want a test suite dedicated to a specific theme.

Limiting damage

The test runner tests everything it can based on the information you provide. Use skips to ensure it does not cause any harm.

Credentials

The test runner assumes that the credentials provided have access to everything. Use skips where this assumption does not hold.

If you simply disagree

If you disagree with the outcome of any test, you are welcome to skip it. If you let us know, we may improve the failure sensitivity or update the test scenario in the next revision.